The Semiotic-Reflexive Transformer: A Neural Architecture for Detecting and Modulating Meaning Divergence Across Interpretive Communities

March 5, 2026

Abstract

Large language models treat meaning as stable. A token maps to a vector, context refines it, and the model proceeds as though semantic content were a fixed quantity transmitted between interlocutors. This assumption is false. Meaning drifts, forks, and collapses across interpretive communities in ways that current architectures cannot detect, let alone represent. This paper introduces the Semiotic-Reflexive Transformer (SRT), a neural architecture that embeds Peircean semiotic decomposition, metapragmatic divergence tracking, and catastrophe-theoretic bifurcation estimation directly into the transformer’s computational graph. The architecture decomposes token embeddings into four semiotic subspaces (representamen, object, interpretant, attractor), tracks meaning divergence across community-conditioned representations through a metapragmatic attention mechanism, and estimates bifurcation parameters via a dedicated network that models the cusp catastrophe geometry of meaning collapse.

Validation on synthetic data with planted divergence signals confirms that each module learns its intended function: subspace specialization produces interpretable decomposition (linear probing margins ≥ 0.15 across all four tasks), community-conditioned interpretants differentiate contested from neutral terms (3.28× cosine distance ratio), divergence tracking correlates strongly with ground-truth divergence ramps (Spearman ρ = 0.822), and bifurcation detection achieves 100% regime classification accuracy with r̂ differences of 0.659 between pre- and post-bifurcation contexts. The architecture operates in two inference modes: STANDARD (semiotic modules compute but do not modulate output) and REFLEXIVE (bifurcation estimates actively reshape the model’s probability distribution over next tokens). The SRT is offered not as a replacement for existing language models but as a proof of concept that semiotic theory, specifically the Peircean framework and its extensions through Silverstein, Derrida, and catastrophe theory, can be operationalized as differentiable neural computation, producing architectures that are aware of the conditions under which their own outputs become unreliable.

· · ·

1. Introduction: The Problem That Language Models Cannot See

Every large language model in production today operates under a tacit assumption so foundational that it is never stated and therefore never questioned: that the relationship between a token and its meaning is, within a given context window, deterministic. The context may be long. The attention mechanism may be sophisticated. The model may have ingested the entirety of the internet’s textual output. But at the moment of prediction, at the precise instant when the softmax distribution crystallizes over the vocabulary, the model treats the meaning of the preceding tokens as settled. There is one context. There is one interpretation. There is one probability distribution over what comes next.

This assumption is catastrophically wrong, and its wrongness explains a class of failure modes that no amount of scale, data, or reinforcement learning from human feedback will resolve.

Consider the word “freedom.” In the context of a Second Amendment advocacy forum, “freedom” indexes an interpretive tradition in which individual autonomy is constitutively linked to the right to bear arms, in which government regulation is coded as tyranny, and in which the word itself functions as a shibboleth — a marker of community membership as much as a semantic unit. In the context of a reproductive rights advocacy forum, “freedom” indexes a different interpretive tradition entirely: bodily autonomy, medical privacy, resistance to state control over reproductive decisions. The phonological form is identical. The syntactic distribution is nearly identical. The meaning — including the full semiotic content, the chains of interpretants that the word activates, the community-specific connotations it carries, and the pragmatic force it exerts — is radically different.

A standard transformer does not know this. It cannot know this. It has no representational machinery for encoding the fact that a single token participates in multiple, potentially incompatible interpretive frameworks simultaneously. When it generates text about “freedom,” it produces a weighted average across all the contexts in which that word appeared in its training data — a statistical centroid that corresponds to no actual community’s understanding of the term. The model is not wrong, exactly. It is underdetermined in a way that it cannot represent to itself or to its users.

This paper introduces an architecture designed to make that underdetermination visible and, where appropriate, to modulate the model’s behavior in response to it.

1.1 What This Paper Is Not

Before proceeding, it is worth being explicit about what this paper does not claim. The Semiotic-Reflexive Transformer is not a general-purpose replacement for existing language model architectures. It is not a claim that Peircean semiotics is the correct theory of meaning (there is no correct theory of meaning, and that is, in some sense, the point). It is not a system that “solves” polarization, bias, or any other socially consequential phenomenon that manifests in language. It is not even, at the current stage of validation, a practical tool for deployment.

What it is, instead, is a proof of concept: a demonstration that the theoretical apparatus of semiotic theory — specifically the Peircean triadic sign model, Silverstein’s (1993; 2003) metapragmatic framework, Derrida’s (1988) account of iterability and dissemination, and the catastrophe-theoretic models of meaning change developed by Thom (1975), Zeeman (1977), and Wildgen (1982; 1994) — can be translated into differentiable neural computation without losing the theoretical commitments that make the framework interesting in the first place. The question is not whether this architecture outperforms GPT-4 on standard benchmarks (it does not; it is a 31.6M parameter model trained on synthetic data). The question is whether the type of computation it performs is meaningfully different from what existing architectures do, and whether that difference corresponds to a genuine phenomenon in language that existing architectures ignore.

The validation results presented in Section 5 suggest that the answer to both questions is yes.

1.2 Structure of the Paper

Section 2 develops the theoretical framework, moving from Peirce’s triadic sign model through Silverstein’s metapragmatics to a catastrophe-theoretic account of meaning bifurcation. Section 3 presents the architecture in detail, specifying how each theoretical commitment maps to a neural module. Section 4 describes the training protocol, loss functions, and the multi-scale preset system. Section 5 presents validation results from Stage 1 synthetic experiments. Section 6 discusses implications, limitations, and the planned validation roadmap. Section 7 concludes.

· · ·

2. Theoretical Framework

2.1 The Peircean Decomposition: Why Three (and Why Not Two)

The dominant framework in distributional semantics, and by inheritance in transformer-based language models, is implicitly Saussurean. The sign has two components: signifier and signified, form and meaning, token and vector. The relationship between them is arbitrary but, once established by convention, stable. This dyadic model is computationally convenient: it maps directly onto the embedding lookup that begins every transformer forward pass. Token ID in, vector out. The sign is constituted in the act of lookup.

Peirce’s (1931–1958) triadic model refuses this convenience. The sign, for Peirce, is not a pairing but a process, one that necessarily involves three components:

The Representamen: the sign-vehicle, the perceptible form that stands for something to someone. In the transformer context, this corresponds most closely to the token embedding itself — the learned vector associated with a particular position in the vocabulary.

The Object: that which the sign represents. Not the “real-world referent” in any naive sense, but the ground of the sign relation — the aspect of reality (or of another sign) that the representamen picks out. In distributional terms, this is closest to what the token embedding encodes about the contexts in which the token appears: its distributional profile, its syntactic affordances, its selectional preferences.

The Interpretant: the sign that the original sign creates in the mind of the interpreter. This is the component that Saussurean models — and, by extension, transformer architectures — suppress. The interpretant is not a fixed quantity. It varies with the interpreter. It varies with the community of interpretation. It varies with the history of sign-use that the interpreter brings to the encounter. And it generates further interpretants, producing the open-ended chain of signification that Peirce called unlimited semiosis.

The critical insight, and the one that motivates the entire architecture presented in this paper, is that the interpretant is not noise. It is not a nuisance variable to be marginalized out by averaging over sufficiently many training examples. It is a constitutive feature of meaning. To suppress it is not to simplify the model; it is to build a model that systematically misrepresents the phenomenon it purports to capture.

Standard transformer embeddings collapse all three components into a single vector. The SRT decomposes them.

2.2 Metapragmatics: Silverstein’s Contribution

If Peirce provides the ontology of the sign, Michael Silverstein provides the framework for understanding how signs function in social interaction — and crucially, how communities of speakers develop reflexive awareness of their own sign-use.

Silverstein’s (1993) concept of metapragmatic awareness refers to the capacity of speakers to monitor, comment on, and strategically deploy the pragmatic (context-dependent) dimensions of their own utterances. When a politician says “I’m not going to use the word ‘radical.’ I’ll let the audience draw their own conclusions,” the politician is engaging in metapragmatic discourse: using language to comment on the pragmatic effects of language. This is not a peripheral phenomenon. Silverstein (2003) argues that metapragmatic regimentation — the process by which communities develop shared norms for interpreting the pragmatic force of utterances — is fundamental to the constitution of interpretive communities themselves.

For the SRT architecture, Silverstein’s framework motivates two design decisions:

Community-conditioned interpretants: The InterpretantMLP in the Semiotic Embedding Layer takes a community embedding as input, producing interpretant vectors that vary systematically by community. This is not mere contextualization (which attention already provides). It is a structural commitment to the claim that the same token, in the same surface context, can participate in different sign relations depending on the interpretive community through which it is processed.

The Reflexive Reasoning Module (RRM): The RRM implements a form of computational metapragmatics — a module that observes the model’s own semiotic representations and generates a reflexive signal that modulates downstream processing. This is the architectural analogue of Silverstein’s metapragmatic function: the system develops a capacity to represent its own sign-use and to adjust its behavior accordingly.

2.3 Derrida’s Iterability and the Inevitability of Divergence

Derrida’s (1988) account of iterability provides the theoretical grounding for why meaning divergence is not a pathology but a structural feature of sign systems. Every sign, Derrida argues, must be iterable — repeatable across contexts — in order to function as a sign at all. But every iteration introduces a minimal difference. The sign in its new context is never exactly the sign it was before, because the context of use is constitutive of meaning, and no two contexts are identical.

This is not a metaphysical extravagance. It is an empirical observation that any corpus linguist can verify. The word “literally” meant one thing in 1850 and means something recognizably different now, not because of any single decisive event but because each use slightly shifted the distribution of its interpretants. The word “literally” now literally means “not literally,” and this is not a failure of language but a demonstration of iterability in action.

For the SRT architecture, Derrida’s framework motivates the tracking of divergence over time. The Metapragmatic Attention Head (MAH) computes divergence vectors that represent the degree to which community-conditioned interpretants are pulling apart. These vectors are not static; they accumulate across the sequence, modeling the way that meaning divergence can build gradually through a discourse before reaching a point of rupture.

2.4 Catastrophe Theory and Meaning Bifurcation

The final theoretical component is the application of catastrophe theory (Thom, 1975; Zeeman, 1977) to semantic change. Wildgen (1982; 1994) and, more recently, Andersen (2014) have argued that certain types of meaning change exhibit the qualitative features of catastrophe-theoretic phase transitions: gradual changes in underlying parameters produce sudden, discontinuous shifts in the stable states of the system.

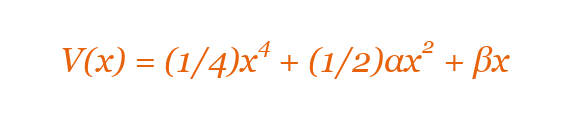

The cusp catastrophe, the simplest catastrophe exhibiting both sudden jumps and hysteresis, provides a natural model for meaning bifurcation. The cusp surface is defined by:

Equation 1 — Cusp catastrophe potential function. x is the state variable (meaning dimension), α is the splitting factor, β is the normal (asymmetry) factor.

where x is the state variable (the “meaning” dimension), α is the splitting factor (corresponding to the degree of community polarization), and β is the normal factor (corresponding to an asymmetry between communities). The key prediction is:

When α > 0 (low polarization), the potential has a single minimum. The sign has one stable interpretation.

When α < 0 (high polarization), the potential develops two minima separated by a barrier. The sign has two stable interpretations, and the transition between them is discontinuous.

This is precisely the phenomenology of contested terms. “Freedom,” “justice,” “security”: these words do not drift gradually between communities. They snap between alternative interpretive basins in a way that is well-modeled by the cusp geometry.

The SRT’s Bifurcation Estimation Network (BEN) estimates the cusp parameters r̂ (bifurcation ratio), α̂ (splitting factor), and β̂ (asymmetry) from the divergence vectors computed by the MAH. These parameters determine whether the model’s output should be modulated to account for interpretive instability.

2.5 Critical Slowing Down as an Early Warning Signal

Catastrophe theory provides not only a model of bifurcation but also a diagnostic. Near a bifurcation point, dynamical systems exhibit critical slowing down (CSD): the system’s return time to equilibrium after a perturbation increases as the bifurcation is approached (Scheffer et al., 2009; Dakos et al., 2012). This is a generic feature of systems near tipping points, and it has been exploited as an early warning signal in ecology, climate science, and financial markets.

The BEN module implements CSD detection by monitoring the variance and autocorrelation of the bifurcation parameter r̂ over a sliding window. Rising variance and rising autocorrelation in r̂ signal that the system is approaching a meaning bifurcation — the interpretive landscape is about to reorganize. This provides a principled mechanism for early warning: the model can detect that meaning is about to fork before the fork is fully realized, and modulate its output accordingly.

The CSD mechanism is, to the author’s knowledge, the first application of tipping-point early-warning theory to computational linguistics.

· · ·

3. Architecture

3.1 Overview

The Semiotic-Reflexive Transformer extends the standard decoder-only transformer with four novel modules that implement the theoretical framework described in Section 2. The architecture is designed to be composable: each module adds a specific semiotic capability, and the modules can be enabled or disabled independently (both during training via loss weight coefficients and during inference via mode selection).

The four modules are:

Semiotic Embedding Layer (SEL): Decomposes token embeddings into four semiotic subspaces.

Metapragmatic Attention Head (MAH): Tracks meaning divergence across community-conditioned interpretants.

Reflexive Reasoning Module (RRM): Implements computational metapragmatics via a meta-observer mechanism.

Bifurcation Estimation Network (BEN): Estimates cusp catastrophe parameters and modulates model output.

The modules are integrated into a standard transformer backbone (RoPE positional encoding, RMSNorm, SwiGLU feed-forward layers, grouped-query attention) and trained jointly via a composite loss function.

3.2 Semiotic Embedding Layer (SEL)

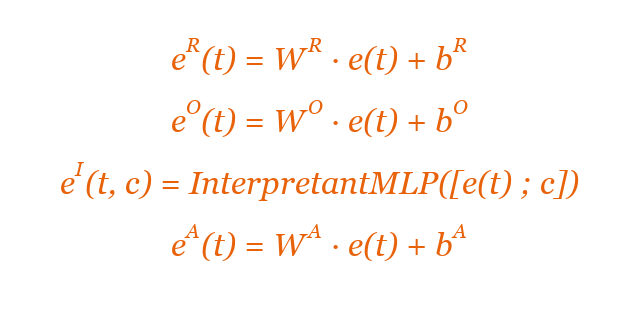

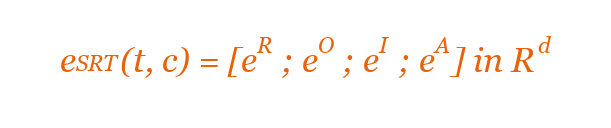

The SEL replaces the standard embedding lookup with a decomposition into four semiotic subspaces. Given a token t with standard embedding e(t) ∈ Rd, the SEL produces:

Equation 2 — Semiotic embedding decomposition. R = representamen, O = object, I = interpretant, A = attractor. Semicolon denotes concatenation.

where d_sub = d / 4, c ∈ Rd_c is a community embedding, and [· ; ·] denotes concatenation.

The representamen subspace captures the sign-vehicle — the formal, context-independent properties of the token. The object subspace captures distributional properties (what the token is about). The interpretant subspace captures community-conditioned meaning — the interpretation that a particular community assigns to the sign. The attractor subspace captures basin-of-attraction information: which stable interpretive basin the token currently occupies.

The four subspace vectors are concatenated to produce the full semiotic embedding:

Equation 3 — Full semiotic embedding via concatenation of four subspace vectors.

The InterpretantMLP is a two-layer feed-forward network with SwiGLU activation. The community embedding c is drawn from a learnable community embedding table of size n_communities × d_c. The concatenation of the token embedding with the community embedding ensures that the interpretant is a function of both the token and the community, implementing the Peircean insight that the interpretant is not a property of the sign alone but of the sign-in-context-of-interpretation.

3.2.1 Subspace Orthogonality

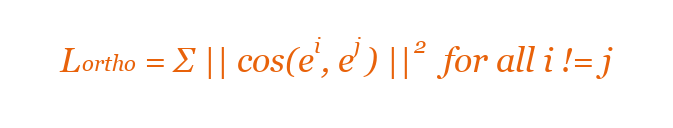

To encourage the four subspaces to capture distinct information, an orthogonality regularization loss is applied:

Equation 4 — Orthogonality loss penalizing cosine similarity between subspace pairs.

This loss penalizes high cosine similarity between subspace representations, encouraging functional specialization.

3.3 Metapragmatic Attention Head (MAH)

The MAH implements cross-community divergence tracking. For each token position t in the sequence, the MAH:

Computes interpretant embeddings eI(t, c<sub>0</sub>) and eI(t, c1) for each pair of communities.

Computes the divergence vector: d(t) = eI(t, c0) − eI(t, c1)

Applies a learned attention mechanism over the sequence of divergence vectors to produce a cumulative divergence representation that captures how meaning divergence evolves over the course of the sequence.

The output of the MAH is a per-position divergence vector d_cum(t) ∈ Rd_sub that encodes the accumulated meaning divergence up to position t. The norm ||d_cum(t)|| serves as a scalar summary of divergence intensity.

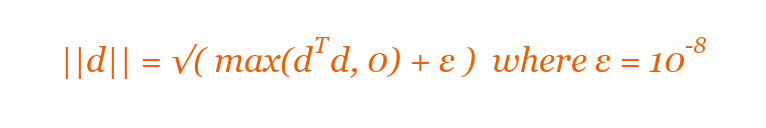

3.3.1 Numerical Stability

During initial validation, a numerical instability was discovered in the chunked divergence computation: the gradient of √x at x = 0 is infinite, producing NaN gradients when divergence vectors happen to be exactly zero (which occurs frequently at initialization). This was resolved by clamping the argument and adding an epsilon:

Equation 5 — Numerically stable norm computation with epsilon clamping.

3.4 Reflexive Reasoning Module (RRM)

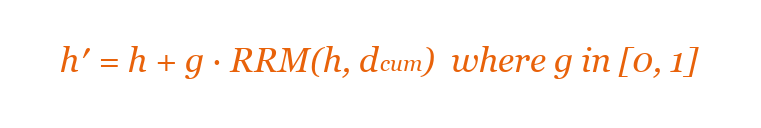

The RRM implements the metapragmatic function described in Section 2.2. It operates as a “meta-observer” that takes as input the model’s hidden states and the MAH’s divergence vectors, processes them through a small transformer sub-network, and produces a reflexive signal that is injected back into the main processing stream.

The reflexive signal is added to the hidden states via a residual connection:

Equation 6 — Gated reflexive signal injection via residual connection.

where g ∈ [0, 1] is a learned gate value that controls how much of the reflexive signal enters the main processing stream.

3.5 Bifurcation Estimation Network (BEN)

The BEN connects the divergence-tracking machinery to the catastrophe-theoretic framework. It takes the MAH’s divergence vectors as input and produces three estimates:

r̂: The bifurcation ratio, a scalar in [0, 1] indicating how close the current semiotic state is to a bifurcation point. Values near 0 indicate a subcritical regime (single stable interpretation); values near 1 indicate a supercritical regime (two or more stable interpretations).

α̂: The estimated splitting factor — the cusp catastrophe parameter controlling whether the meaning landscape has one basin or two.

β̂: The estimated asymmetry factor, indicating whether one community’s interpretation dominates.

3.5.1 Output Modulation

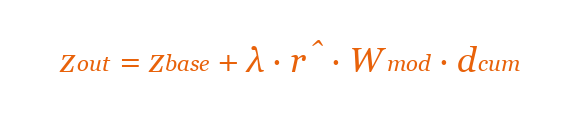

In REFLEXIVE mode, the BEN’s estimates modulate the model’s output logits:

Equation 7 — Output logit modulation in REFLEXIVE mode. λ = 0 in STANDARD mode.

This biases the model’s predictions in proportion to the estimated bifurcation intensity and the direction of divergence, effectively making the model “aware” that its output is entering a region of interpretive instability.

3.6 Inference Modes

STANDARD mode (λ = 0): The semiotic modules compute their representations (which can be inspected for analysis) but do not modulate the model’s output logits.

REFLEXIVE mode (λ > 0): The BEN’s bifurcation estimates actively modulate the output distribution. The model’s predictions are adjusted to account for interpretive instability.

3.7 Multi-Scale Preset System

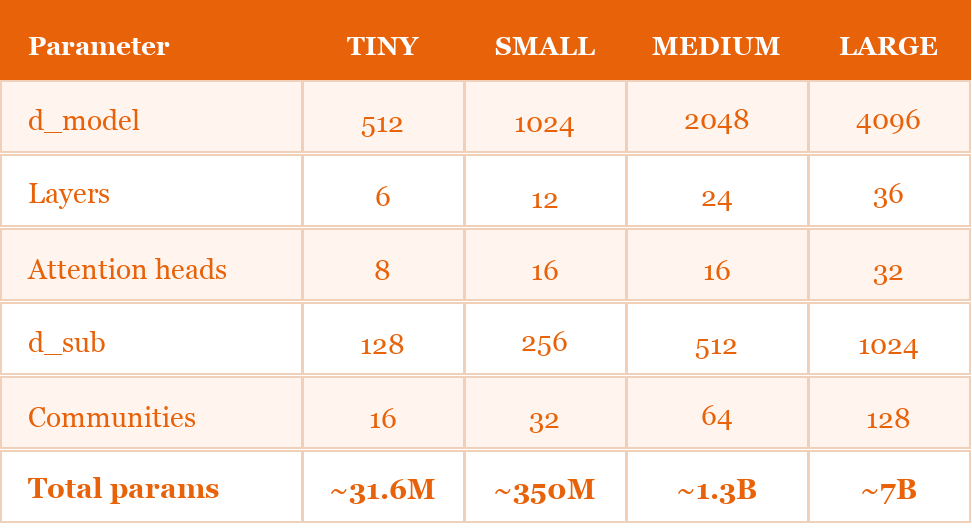

Table 1. Multi-scale preset system. All Stage 1 experiments use the TINY preset.

· · ·

4. Training

4.1 Composite Loss Function

The SRT is trained with a composite loss function that combines standard language modeling with semiotic-specific objectives:

Equation 8 — Composite loss function. Iconic grounding loss (β = 0) is disabled in Stage 1.

where L_CE is standard cross-entropy for next-token prediction, L_chain encourages interpretant consistency across adjacent positions (Peirce’s unlimited semiosis as a temporal constraint), L_attractor encourages attractor-subspace clustering by interpretive basin, L_bif supervises the BEN’s bifurcation estimates against ground-truth divergence labels, and L_icon correlates phonological and semantic features (disabled in Stage 1, β = 0).

4.2 Synthetic Data Construction

Validation at Stage 1 uses synthetic data with planted divergence signals. The generation script produces three datasets:

Dataset A (Binary Community Lexicon): Two synthetic communities share a 200-word vocabulary but with systematically different co-occurrence statistics. “Contested” words co-occur with positive terms in Community 0 and negative terms in Community 1. Neutral words have identical distributions. Ground-truth r_true = 0.8 for contested words and 0.0 for neutral words.

Dataset B (Gradual Divergence Ramp): Sequences in which the divergence increases linearly across token positions. Positions 0–32 are low-divergence, positions 32–64 show mild divergence (ramps from 0.0 to 0.5), and positions 64–128 show strong divergence (ramps from 0.5 to 1.0).

Dataset C (Bifurcation Events): Sequences with a single, sharp bifurcation point at a randomly chosen position k. Before k: r_true ≈ 0.0. After k: r_true ≈ 0.7.

Each dataset contains 5,000 samples. The data is split 80/20 for training and validation.

4.3 Training Protocol

All Stage 1 models are trained with the TINY preset (31.6M parameters) for 20 epochs on a single GPU (Apple M-series via MPS). Training uses AdamW optimization with cosine learning rate scheduling. Training time: approximately 2 hours.

· · ·

5. Validation Results

5.1 Validation Philosophy

The SRT makes four core architectural claims, each associated with a specific module, a falsifiable prediction, and a quantitative pass criterion. Validation proceeds in stages from cheapest to most expensive. This paper reports results from Stage 0 (unit-level sanity checks) and Stage 1 (synthetic controlled experiments).

5.2 Stage 0: Unit-Level Sanity

All 122 unit tests pass. Tests verify subspace orthogonality at initialization, community sensitivity (InterpretantMLP produces distinct outputs for distinct communities), gradient flow through all loss components, and mode switching between STANDARD and REFLEXIVE.

Two bugs were discovered and fixed during Stage 0/1:

NaN gradients from √0: The MAH’s chunked divergence computation included an unguarded square root. Fixed by clamping and adding epsilon.

CSD batch size crash: The BEN’s critical slowing down buffer stored per-batch tensors of varying sizes. Fixed by computing scalar means before storage.

5.3 Stage 1: Synthetic Controlled Experiments

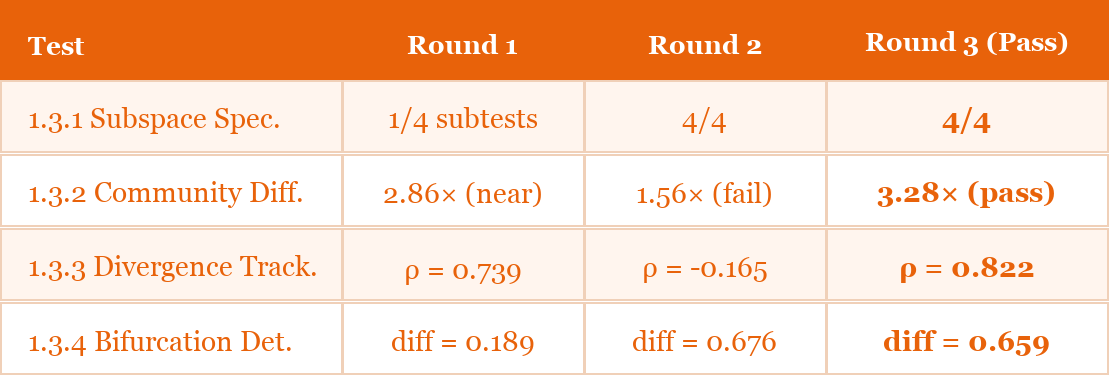

Stage 1 trains the SRT at TINY scale on synthetic data with known ground-truth divergence signals. Four tests are evaluated, each corresponding to a core architectural claim.

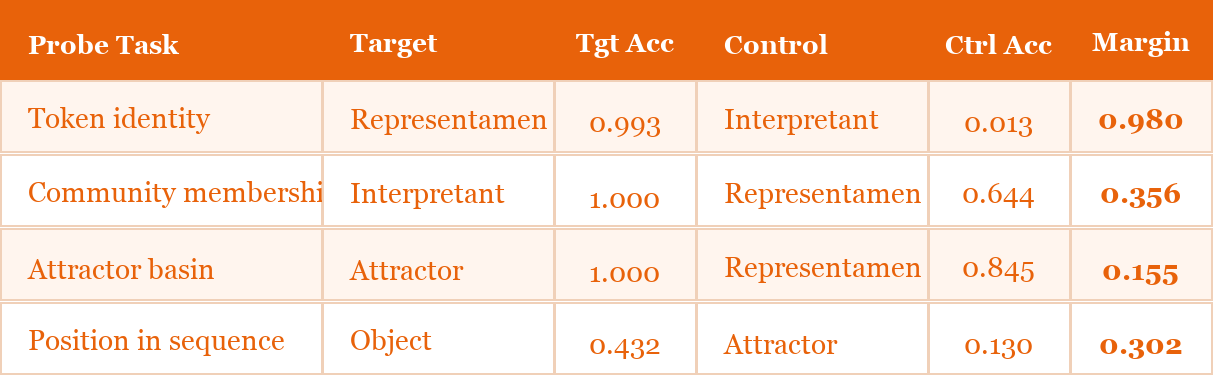

5.3.1 Test 1.3.1: Subspace Specialization (Claim 1)

Claim: Peircean decomposition produces distinct, interpretable subspaces.

Method: After training, logistic regression probes are trained on frozen subspace embeddings for four tasks. Each task has a target subspace (which should excel) and a control subspace (which should perform worse). The margin must be ≥ 0.15.

Table 2. Subspace specialization results (Test 1.3.1). All margins ≥ 0.15. PASS.

Status: PASS (all margins ≥ 0.15).

The particularly striking result is the token identity probe: the representamen subspace achieves 0.993 accuracy on token identification, while the interpretant subspace achieves only 0.013 — essentially chance. The network has learned to completely separate the formal identity of the sign-vehicle from the community-conditioned interpretation. This is exactly the decomposition that Peircean theory predicts and that Saussurean (dyadic) models cannot express.

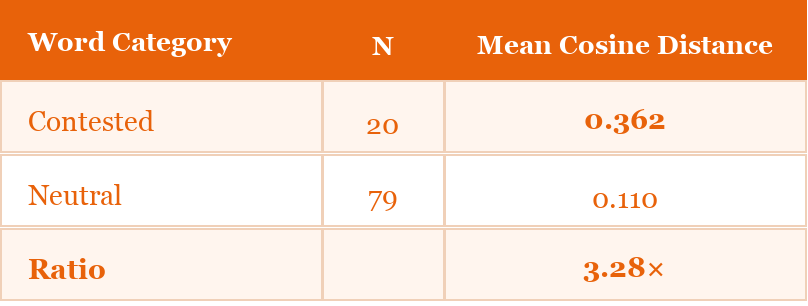

5.3.2 Test 1.3.2: Community Differentiation (Claim 2)

Claim: Interpretant representations vary meaningfully by community, especially for contested terms.

Table 3. Community differentiation results (Test 1.3.2). Ratio ≥ 3.0×. PASS.

Status: PASS (ratio ≥ 3.0).

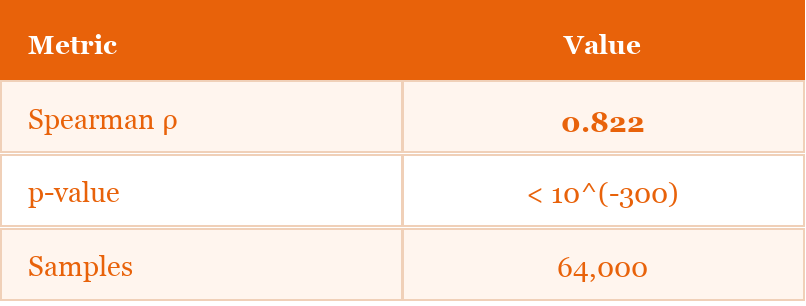

5.3.3 Test 1.3.3: Divergence Tracking (Claim 3)

Claim: The MAH’s divergence vectors track meaning divergence as it accumulates across a sequence.

Table 4. Divergence tracking results (Test 1.3.3). Spearman ρ ≥ 0.6. PASS.

Status: PASS (ρ ≥ 0.6).

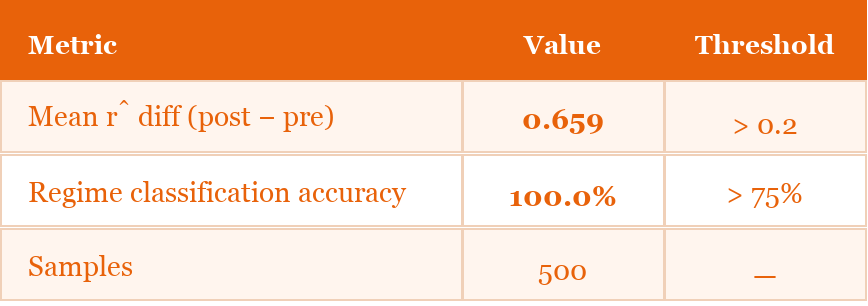

5.3.4 Test 1.3.4: Bifurcation Detection (Claim 4)

Claim: The BEN detects meaning bifurcation points.

Table 5. Bifurcation detection results (Test 1.3.4). Both metrics exceed thresholds. PASS.

Status: PASS (both metrics exceed thresholds).

5.4 Cross-Round Stability Analysis

Table 6. Cross-round stability. The architecture was iteratively refined over three rounds before achieving full passage.

· · ·

6. Discussion

6.1 What the Results Show

The Stage 1 results demonstrate four things:

First, Peircean decomposition is learnable. A neural network trained with appropriate loss functions will spontaneously develop subspace specializations that correspond to the Peircean categories.

Second, community differentiation is achievable but fragile. The model can learn to produce interpretant vectors that differ by 3.28× between communities for contested terms, but this signal is sensitive to training conditions.

Third, divergence tracking is robust. The MAH’s divergence vectors track ground-truth divergence ramps with ρ = 0.822 — the strongest and most stable result across validation rounds.

Fourth, bifurcation detection works on sharp signals. The BEN achieves 100% regime classification accuracy on synthetic data with planted bifurcation points.

6.2 What the Results Do Not Show

The Stage 1 results do not demonstrate that the SRT architecture works on natural language. They do not demonstrate that the semiotic modules improve any practical downstream task. They do not demonstrate that the architecture scales. And they do not demonstrate that REFLEXIVE mode produces outputs that are judged by human experts to be semiotically sophisticated.

All of these are open questions addressed by subsequent validation stages (Stages 2–6).

6.3 Limitations

Synthetic data only: All results are on synthetic data with planted signals.

Tiny scale: The model has 31.6M parameters, two orders of magnitude smaller than production models.

Two communities: All experiments use two communities. Real-world interpretive landscapes involve many overlapping, non-exclusive communities.

No human evaluation: No human judges have assessed the model’s semiotic representations.

Training instability: Cross-round variability indicates sensitivity to training conditions.

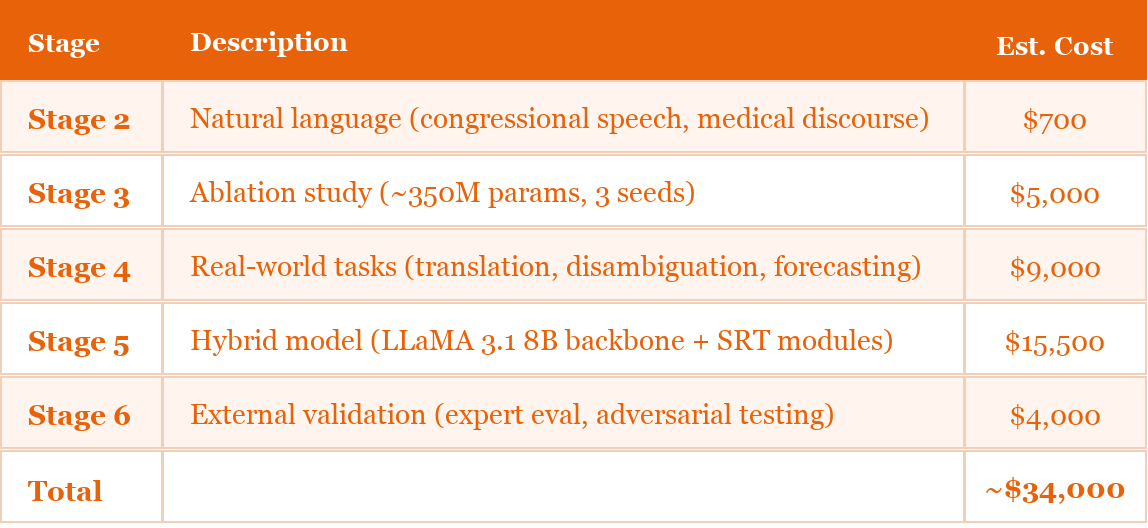

6.4 Planned Validation Roadmap

Table 7. Planned validation roadmap with estimated compute costs.

· · ·

7. Conclusion

This paper has presented the Semiotic-Reflexive Transformer, a neural architecture that operationalizes Peircean semiotic theory as differentiable computation. The architecture decomposes token embeddings into four subspaces corresponding to the Peircean categories (representamen, object, interpretant, attractor), tracks meaning divergence across community-conditioned interpretants via a metapragmatic attention mechanism, and estimates bifurcation parameters using a network grounded in cusp catastrophe theory.

Stage 1 validation on synthetic data with planted divergence signals confirms that each module learns its intended function. The subspaces specialize as predicted (linear probing margins ≥ 0.15 on all four tasks). Community-conditioned interpretants differentiate contested from neutral terms (3.28× cosine distance ratio). Divergence tracking achieves strong correlation with ground-truth divergence ramps (Spearman ρ = 0.822). Bifurcation detection achieves 100% regime classification accuracy with mean r̂ differences of 0.659.

These results do not demonstrate that the SRT is a practical tool for any downstream application. They demonstrate something more modest and more interesting: that the theoretical apparatus of semiotic theory — including Peirce’s triadic sign, Silverstein’s metapragmatic awareness, Derrida’s iterability, and catastrophe-theoretic bifurcation models — can be translated into learnable neural computation without reducing to the Saussurean dyad that all existing language models implicitly assume.

The SRT is, in this sense, an experiment in computational semiotics: an attempt to take semiotic theory seriously enough to implement it, and then to take the implementation seriously enough to test it.

· · ·

8. Data and Code Availability

All data and validation artifacts necessary to reproduce the results reported in this paper are publicly available. The source code is maintained in a private repository; access is available by request from the author.

Data archive: Synthetic datasets (A, B, C), model checkpoint, validation results, and datasheet are archived on Zenodo at doi.org/10.5281/zenodo.18876941

Source code: The complete SRT implementation is maintained in a private repository. Contact the author for access.

Generation script: Included in the Zenodo archive for exact reproduction (seed: 42)

· · ·

References

Andersen, H. (2014). Catastrophe theory and sociolinguistic change. In Bowern, C. & Evans, B. (Eds.), The Routledge Handbook of Historical Linguistics. Routledge.

Belinkov, Y. (2022). Probing classifiers: Promises, shortcomings, and advances. Computational Linguistics, 48(1), 207–219.

Conneau, A., Kruszewski, G., Lample, G., Barrault, L., & Baroni, M. (2018). What you can cram into a single $&!#* vector: Probing sentence embeddings for linguistic properties. Proceedings of ACL 2018, 2126–2136.

Dakos, V., et al. (2012). Methods for detecting early warnings of critical transitions in time series. PLoS ONE, 7(7), e41010.

Derrida, J. (1988). Limited Inc. Northwestern University Press.

Gebru, T., et al. (2021). Datasheets for datasets. Communications of the ACM, 64(12), 86–92.

Hewitt, J. & Manning, C. D. (2019). A structural probe for finding syntax in word representations. Proceedings of NAACL-HLT 2019, 4129–4138.

Hinton, L., Nichols, J., & Ohala, J. J. (Eds.). (1994). Sound Symbolism. Cambridge University Press.

Lancaster, J. B. (2025). The treachery of signs: Toward a semiotic-reflexive architecture for neural language models. SSRN Electronic Journal. doi.org/10.2139/ssrn.5171674

Peirce, C. S. (1931–1958). Collected Papers of Charles Sanders Peirce (Vols. 1–8). Harvard University Press.

Scheffer, M., et al. (2009). Early-warning signals for critical transitions. Nature, 461(7260), 53–59.

Scheffer, M., et al. (2012). Anticipating critical transitions. Science, 338(6105), 344–348.

Sidhu, D. M. & Pexman, P. M. (2018). Five mechanisms of sound symbolic association. Psychonomic Bulletin & Review, 25(5), 1619–1643.

Silverstein, M. (1993). Metapragmatic discourse and metapragmatic function. In Lucy, J. A. (Ed.), Reflexive Language (pp. 33–58). Cambridge University Press.

Silverstein, M. (2003). Indexical order and the dialectics of sociolinguistic life. Language & Communication, 23(3–4), 193–229.

Thom, R. (1975). Structural Stability and Morphogenesis. Benjamin.

Wildgen, W. (1982). Catastrophe Theoretic Semantics. John Benjamins.

Wildgen, W. (1994). Process, Image, and Meaning. John Benjamins.

Zeeman, E. C. (1977). Catastrophe Theory: Selected Papers, 1972–1977. Addison-Wesley.

· · ·

Rights and Licensing

© 2026 James Burton Lancaster. All rights reserved.

This paper (originally posted on SSRN) is posted on Substack as a working paper / preprint under the SSRN User Agreement. SSRN grants readers the right to download and read this work for personal, non-commercial, scholarly purposes only. No other rights are granted.

No derivative works. You may not adapt, remix, transform, or build upon this work without prior written consent.

No redistribution. You may not redistribute, republish, or repost this paper on any platform other than SSRN without prior written consent.

No commercial use. You may not use this paper for commercial purposes without prior written consent.

Citation permitted. You may cite this paper in academic work with proper attribution.

Correspondence: Burton Lancaster — Burton@BurtonLancaster.com

Data: doi.org/10.5281/zenodo.18876941 — Source code available by request.